[Update 2018-06-15: A colleague pointed out a link to similar work, but written in Go.]

It all started innocently enough, but like most "15 minute ideas" it ended up taking me the majority of a day. I wound up in a cool place, though, at the intersection of Big Data, Python and Infosec. I also had a lot of fun in the process, which of course is the most important thing.

The Setup

Yesterday I came across a blog post by Troy Hunt (

@troyhunt) called

Enhancing Pwned Passwords Privacy by Exclusively Supporting Anonymity. Troy, of course, is the force behind

https://haveibeenpwned.com, a site famous for collecting password dumps from various data breaches and allowing users to find out if their account credentials have been compromised. Somehow, though, I missed that they offer a related service: given a specific password (rather, the SHA1 hash of the password), they can tell you how many times that password has been found in any of the breaches they track. They currently have over 500,000,000 unique passwords, which is quite impressive! Other sites are starting to use this service as a quality check when it comes time for users to change their passwords. If the new password is present many times in the HIBP database, assume it's not a good password and ask the user to choose something else. HIBP exposes a REST API that makes this lookup simple and easy.

So far, that's pretty straightforward, but Troy introduced another wrinkle. As a service provider,

he doesn't want to know everyone's password. Not even the hash. So HIBP uses Cloudflare's

k-Anonymity protocol. Basically, you can submit just the first few characters of the password's SHA1 hash, and HIBP will send you back a list of all the hashes with that prefix. You're on the hook for searching that small list, so only the caller ever knows the full hash, let alone whether it was present in the database.

As a method for checking existence in a large set without divulging the actual element you're testing, it's pretty good. It works, and is pretty straightforward to understand and implement.

The Problem

Troy's post originally caught my eye because I'm working with a set of plaintext passwords for which I'd like to do ongoing data enrichment. It occurred to me that presence in the HIBP database might be a very useful datapoint to track. For small sets or one time use, I could have just used the HIBP API, but I plan to run this on a continuous basis and don't want to beat up on their server. When I saw that they publish the

list of hashes for download, though, I had an idea.

What if I could check a password's existence in the list without having to call their API every time and yet also without having to search the entire 29GB list every time I wanted to do a check? And I need to do it a lot, so it really needs to be quick.

As it turns, there's a perfect tool for this:

the Bloom filter.

Bloom Filters to the Rescue!

A Bloom filter is a data structure that allows you to quickly check to see if a given element is a member of a set, without disclosing or storing the actual membership of the set. That is, given a populated Bloom filter, you can test a specific element for membership, but you can't know anything about the other potential members unless you test them also. Populating a Bloom filter with a large number of elements can be relatively slow, but once it's ready for use, tests are very quick. They are also extremely space efficient: my populated filter for the 29GB HIBP hash list is only ~860MB, or about 3% of the size of the original dataset.

How do They Work?

Imagine a bloom filter as a set of independent hashing functions and an array of bits. We'll talk about how many different hashing functions you need and large the bit array should be later, but for now, just assume we have a single hash function that outputs fixed-sized hashes of, say, 10 bits. That means the bit array for this example would be 10 bits long.

To add an element to the Bloom filter (say, the string "element1"), run it through the hash function and notice which bits in the output are set to 1. For each of these, set the corresponding element in the bit array to 1 also. Since you only have one hash function and one member so far, the filter's bit array is exactly equal to the output of the hash of "element1". Do this hash/bit-setting operation again for each additional element in your dataset. In the end, each bit in the filter's array will be set to 1 if any of the elements' hashes had the corresponding bit set to 1. Basically it's a bitwise OR of all the hash outputs.

Testing to see if a given element is in the set of members is straightforward in our trivial example: Simply hash the candidate member with the same function and notice which output bits are set to 1. If each and every one of those bits are also 1 in the filter's array, the candidate is part of the set. If any of the output bits are still set to 0 in the filter's array, though, the candidate was clearly never processed by the hash function, and thus it's not a member of the original set.

Bloom Filter Parameters

Of course, things are slightly more complicated than that. Hash functions experience collisions, especially with tiny 10 bit outputs. In our example, we'd actually get a ton of false positives (the filter says the candidate is a member of the set when it's really not). However, with a Bloom filter, you can never get a false negative. If the filter says the candidate is not a member of the set, it definitely isn't.

To get around the false positive (FP) problem, you can tune the Bloom filter with two parameters: the number of independent hash functions you want to use on each member and the size of the bit array. Hashing the same element with multiple algorithms and storing each result in the bit array is a good way to avoid FPs, since the more algorithms you use for each test, the less likely collisions are to occur between all of them. Expanding the size of the bit array helps, too, because it effectively expands the number of unique outputs for each hash, meaning you can store more values because the collision space is larger.

There's some nice math behind choosing the optimum number of hash algorithms (usually notated as

k) and the best size for the bit array (usually notated as

m). The other thing you need to know is the number of elements in the set (which is called

n). Fortunately, there's a

easy chart online, which I reproduce in part here:

Table 3: False positive rate under various m/n and k combinations.

| m/n | k | k=1 | k=2 | k=3 | k=4 | k=5 | k=6 | k=7 | k=8 |

| 2 | 1.39 | 0.393 | 0.400 | | | | | | |

| 3 | 2.08 | 0.283 | 0.237 | 0.253 | | | | | |

| 4 | 2.77 | 0.221 | 0.155 | 0.147 | 0.160 | | | | |

| 5 | 3.46 | 0.181 | 0.109 | 0.092 | 0.092 | 0.101 | | | |

| 6 | 4.16 | 0.154 | 0.0804 | 0.0609 | 0.0561 | 0.0578 | 0.0638 | | |

| 7 | 4.85 | 0.133 | 0.0618 | 0.0423 | 0.0359 | 0.0347 | 0.0364 | | |

| 8 | 5.55 | 0.118 | 0.0489 | 0.0306 | 0.024 | 0.0217 | 0.0216 | 0.0229 | |

| 9 | 6.24 | 0.105 | 0.0397 | 0.0228 | 0.0166 | 0.0141 | 0.0133 | 0.0135 | 0.0145 |

| 10 | 6.93 | 0.0952 | 0.0329 | 0.0174 | 0.0118 | 0.00943 | 0.00844 | 0.00819 | 0.00846 |

| 11 | 7.62 | 0.0869 | 0.0276 | 0.0136 | 0.00864 | 0.0065 | 0.00552 | 0.00513 | 0.00509 |

If you choose a starting array size and divide it by the number of elements you expect to have in your set, you can use the ratio to find the appropriate row on the chart. The values in the cells are the FP probability (0.0 to 1.0, typically notated as p, though not in this chart). You can choose the worst FP probability you think you can live with, follow that cell up to the top row and find the number of hash functions you'll need (k=). If you didn't find a p value you liked, you could move down a row and try again with a different m/n ratio until you are satisfied, then just use the ratio and your known n to calculate the correct number of bits m you'll need in your array.

It's actually pretty simple once you try it, but now that you know how to read the chart... well... you don't have to.

Unleash the Snake!

Back to my original problem: I wanted to build a Bloom filter out of the HIBP password hashes so I could quickly check potential passwords against their list without calling their API, and I wanted to do it efficiently. I figured a Bloom filter would be a nice solution, but how to actually do it in Python?

Populating a Filter

After trying a couple of different methods, I ended up using a module called

pybloom. It provides a dead simple mechanism for creating, using, saving and loading filters. Install it like so:

pip install pybloom-mirror

Here's the code I used to populate a Bloom filter from the HIBP database (saved in a file called pwned-passwords-ordered-2.0.txt)

#!/usr/bin/env python

from __future__ import print_function

from pybloom import BloomFilter

import sys

from tqdm import tqdm

# The exact number of hashes in the file, as reported by "wc -l"

NUM_HASHES=501636842

print("** Creating filter... ", end="")

f = BloomFilter(capacity=NUM_HASHES, error_rate=0.001)

print("DONE.")

print("** Reading hashes (this will take a LONG time)... ", end="")

# Flush output so we don't have to wait for the filter read operation

sys.stdout.flush()

# This is the actual number of lines in the file.

with tqdm(total=NUM_HASHES) as pbar:

with open("pwned-passwords-ordered-2.0.txt", "rb") as hashes:

for l in hashes:

(h,n) = l.split(':')

f.add(h)

pbar.update(1)

print("DONE.")

print("** Stored %d hashes." % len(f))

print("TEST: Hash is in filter: ", end="")

print("000000005AD76BD555C1D6D771DE417A4B87E4B4" in f)

print("TEST: Non-existant Hash found in filter: ", end="")

print("AAAABAA5555555599999999947FEBBB21FCDAA24" in f)

with open("filter.flt", "w") as ofile:

f.tofile(ofile)

To start off with, I just counted the number of lines in the file with wc -l and used that to set the NUM_HASHES variable. Then I created the BloomFilter() object, telling it both the number of elements to expect and the FP rate I was willing to tolerate (0.001 works out to 0.1%, or 1 FP out of every 1,000 queries).

See? I told you you didn't need that chart after all.

Populating the filter is just as easy as reading in the file line by line, splitting the hash out (it's in the first field) and adding it to the filter. My filter object is called f, so f.add(h) does the trick.

Just as a quick sanity check, I printed the value of len(f), which for a BloomFilter() actually returns the number of members of the original set, which Python considers it's length. Since I knew exactly how many members I added (the number of lines in the file), this matched up exactly with my expectations. If you the number of elements in your filter is a little more or a little less than the capacity parameter asked for, though, that's OK. If it's a lot different, or you don't know the final number of elements in advanced, pybloom also provides a ScalableBloomFilter() object that can grow as needed, but this wasn't necessary here.

After populating the filter, I did a couple of quick tests to see if specific elements were or were not in the list. I chose one from the file so I knew it'd be present and one that I made up entirely. I expect to find the first one, so "000000005AD76BD555C1D6D771DE417A4B87E4B4" in f should have evaluated to True. Conversely, I knew the other wasn't in the set, so "AAAABAA5555555599999999947FEBBB21FCDAA24" in f should have returned False. Both acted as expected.

Finally, I wrote the populated filter as a binary blob to the file filter.flt so I can use it again later. This is how I created that 860MB filter file I mentioned earlier. At this point, were I so inclined, I could delete the original password hash list and recover 29GB of space.

Loading a Filter from Disk

The next step is to load the populated filter back from disk and make sure it still works. Here's the code:

#!/usr/bin/env python

from __future__ import print_function

from pybloom import BloomFilter

print("** Loading filter from disk... ", end="")

with open("filter.flt", "rb") as ifile:

f = BloomFilter.fromfile(ifile)

print("DONE.")

print("** Filter contains %d hashes." % len(f))

print("TEST: Hash is in filter: ", end="")

print("000000005AD76BD555C1D6D771DE417A4B87E4B4" in f)

print("TEST: Hash not found in filter: ", end="")

print("AAAABAA5555555599999999947FEBBB21FCDAA24" in f)

Here, I'm really just testing that I can read it back in and get the same results, which I do. The only new part is this line: f = BloomFilter.fromfile(ifile), which reads the blob back into memory and creates a filter from it. I then repeat the original tests just to make sure I get the same results.

Bloom Filter Performance

After all this, you might be wondering how well the filter performs. Well, so was I. I have to say that creating the filter was pretty slow. On my laptop, the code took about 1hr 45m to run. Most of that, though, was overhead involved in reading a 29GB file line by line in order to avoid running out of RAM and splitting the result to isolate the password hash from the other field I wasn't using. Fortunately, reading and writing the filter on disk only took a few seconds.

Once I populated the filter, I used the following program to get some rough timings on actually using the thing:

#!/usr/bin/env python

from __future__ import print_function

from pybloom import BloomFilter

import timeit

REPETITIONS = 1000000

setup_str = """

from pybloom import BloomFilter

with open('filter.flt', 'rb') as ifile:

f = BloomFilter.fromfile(ifile)

"""

res = timeit.timeit(stmt='"000000005AD76BD555C1D6D771DE417A4B87E4B4" in f', setup=setup_str, number=REPETITIONS)

print("Avg call to look up existing hash: %fms." % ((res / REPETITIONS) * 1000))

res = timeit.timeit(stmt='"AAAABAA5555555599999999947FEBBB21FCDAA24" in f', setup=setup_str, number=REPETITIONS)

print("Avg call to look up non-existing hash: %fms." % ((res / REPETITIONS) * 1000))

Using Python's timeit module, I could easily run each test 1,000,000 times and compute an average time for membership tests. Bloom filters have a well-documented property that lookups are constant time no matter what specific value you are testing, so I didn't have to worry about using 1,000,000 unique values. I did, however, test "candidate exists in set" and "candidate does not exist in set" separately, and you can see why this was a good idea:

> ./time-filter.py

Avg call to look up existing hash: 0.006009ms.

Avg call to look up non-existing hash: 0.003758ms.

As you can see, the average test time for a legit member of the set was only 0.006ms, which is very quick indeed! Suspiciously quick, even, so if you find that I messed up the timings, drop me a comment and let me know!

If the candidate value was not part of the set, the lookup even quicker, at only 0.004ms (roughly 37% faster). I was surprised at first, because of that constant time lookup property I mentioned, but I quickly realized that the speedup is probably because of a short-circuit: Once any of the hash bits fails to appear in the bit array, we know for sure that the candidate is not a member of the set, and can stop before we complete all the hash operations. On average, candidates that don't exist only had to go through 63% of the hash/bit array checks, so of course those operations complete more quickly.

Conclusion

This was just a proof-of-concept, so things are a little rough and certainly not production-ready. However, just based on this short trial, I can say that I accomplished my goals:

- I can check whether a specific password was found in the HIBP database

- I don't have to send the password hashes to HIBP, nor will I overload their server with requests

- I can do it quickly, without storing or searching the entire 29GB corpus for each lookup

I have to live with two key limitations, though I'm quite happy to do so:

- The answer to "does this hash exist in the set" is subject to FPs. I choose what rate I'm comfortable with, but if I want to be certain, maybe I'd follow up with a call to the HIBP API when need to confirm a positive Bloom filter result. For my purposes, though, I can live with 0.1% FP rate.

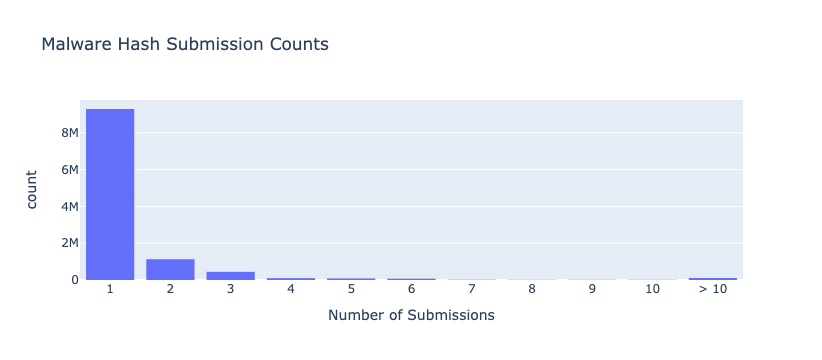

- I lose the second field in the HIBP database, which is the number of times the password was found in the breach corpus. This could be pretty useful if you consider that just finding a password once might be a different situation than finding it 30,000 times. Again, my application doesn't need that info, and I was just throwing that data away anyway, so no problem there.

Sadly, the built-in disk save/load file format provided by

pybloom is binary-only, and as far as I can tell, it's not guaranteed to be portable between machines of different architectures. This isn't a problem for me at all, but I read in

another of Troy Hunt's posts that hosting the download for 29GB files isn't cheap. Perhaps with some extra work there'd be a portable way to serialize the Bloom filter, such that one could offer the much smaller populated filter instead, and anyone who just wanted to do simple existence checking at scale could use that.

Still, I've showed that this kind of anonymous checking could be done, that it's efficient in terms of both storage and test speed, and quite easy to code. Success!